Casper van Elteren · Philosophy

Are We in the Chinese Room?

AI may not only simulate understanding; it may also tempt us into workflows where we produce competent outputs without preserving understanding ourselves.

It is difficult to escape the news cycle on AI. Open any news app and it is there: another story about replacement, acceleration, alignment, or risk. Much of the discussion focuses on whether AI will replace us, how quickly the revolution is unfolding, and whether we should slow it down or ensure that these systems remain aligned with human values. I worry about something slightly different. I am less afraid that AI will replace us than that we may become like the man in the Chinese Room: passing on symbols in response to inputs while increasingly losing contact with their meaning.

What I mean by the Chinese Room

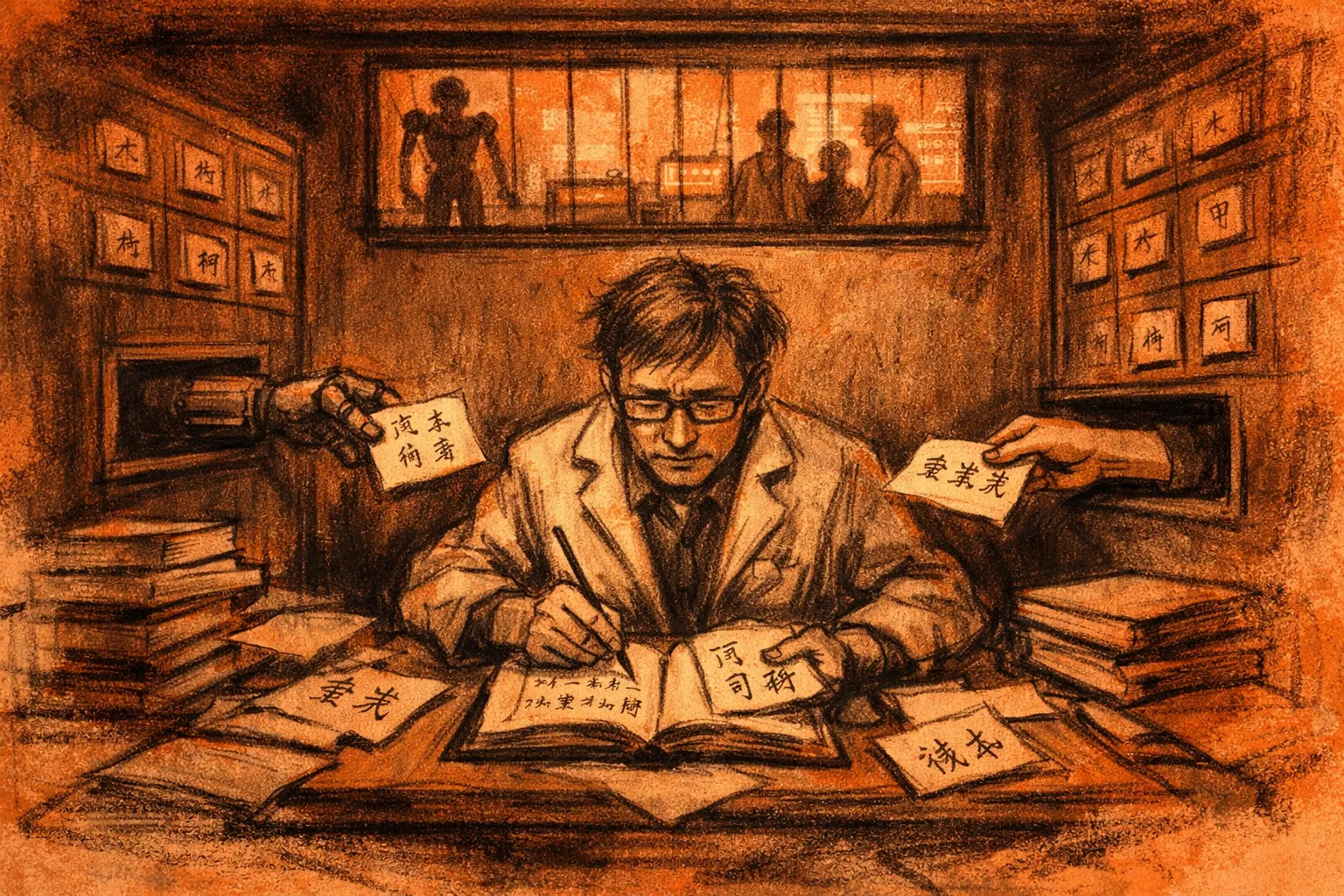

The Chinese Room is a thought experiment introduced by John Searle [1]. It addresses the question of whether machines can think or understand. In the thought experiment, a man who does not know Chinese sits inside a room. Each day, a note written in Chinese is passed to him through a slot in the door. Before entering the room, he was given a rulebook that tells him how to manipulate the symbols on the page and produce a corresponding output, which he then passes back out through the same slot. From the outside, the room appears to understand Chinese: it responds coherently and appropriately to whatever is sent in. But from the perspective of the man inside, there is no understanding at all. He is not reading Chinese; he is only following rules. Searle’s claim was not merely that computers are complicated rule-followers, but that formal symbol manipulation by itself does not amount to understanding. Syntax, on this view, is not yet semantics.

Searle’s question was simple: does the man in the room understand Chinese? If not, then does the room as a whole understand it? The point of the argument is that outwardly correct performance is not obviously the same thing as genuine understanding.

A stylized depiction of Searle’s Chinese Room: a system may produce coherent outputs by following formal rules without thereby understanding the meaning of the symbols it manipulates.

My concern is that, in some contexts, we are at risk of becoming the man in the Chinese Room. AI gives us a similar kind of machinery. We provide a prompt, the system processes it, and it returns an output. We can use that output to improve our writing, draft emails, polish reports, generate code, and even produce material that resembles scientific insight. But the uncomfortable question is whether, in doing so, we preserve understanding or gradually give it away.

What is meaning?

AI changes the level at which we interact with problems. Instead of spending time wrestling directly with writing, coding, mathematics, or sustained thought, more of that internal processing is delegated to the machine. This can be immensely useful. It removes friction, speeds up production, and allows us to focus more on higher-level conceptual work. But it also carries a risk: we may become operators of a system whose outputs we can use effectively without fully understanding how they were produced or whether they deserve our trust.

The problem creeps in exactly at this gap. I think this is because there is something it is like to solve a problem in your own mind: to wrestle with it, to feel confused by it, and then suddenly to get it. That flash of insight matters. It is not merely a romantic attachment to difficulty. It is part of what anchors understanding. When we bypass too much of that process, we may still produce useful outputs, but we risk losing contact with the meaning that made those outputs valuable in the first place.

This matters especially in science and scholarship. The problem is not simply that AI can produce text, code, or arguments for us. The deeper problem is that it may allow us to stop doing the kinds of thinking required to evaluate whether those outputs are correct. Knowledge generated at great speed is still fragile if we can no longer reliably check it. In that case, the outputs of AI begin to stand on their own, while we drift into the role of translators or relays between systems we no longer fully understand.

It is, of course, convenient not to think too hard about how to phrase every email or how to structure every report. AI smooths rough edges and helps work flow more easily. There is real value in that. The aim, then, is not to reject AI, but to avoid becoming the man in the room. To do that, we must remain able to check the output, question the process, and understand enough of the underlying language of the problem to know what the system is doing. If meaning is to remain human, then we must still be able, in some real sense, to read the Chinese ourselves. Otherwise, we risk drifting into a world in which messages pass endlessly between humans and machines, while fewer and fewer of us understand what is actually being said.

Source Notes

References

1. John R. Searle (1980). Minds, Brains, and Programs. Behavioral and Brain Sciences.