Casper van Elteren · Statistics · 13 min read

When Does It Break? An Introduction to Hazard Analysis

The logit models whether something happens within a window. Hazard analysis opens that window and asks when the event occurs.

One of the things I enjoy most about mathematics is that the same formal idea can keep reappearing in places that, at first glance, seem unrelated. A compact expression shows up in one problem, solves it elegantly, and then later turns out to be useful somewhere else entirely. That kind of migration is part of what makes the subject feel alive to me.

In the previous post on the logistic and logit functions, I followed one such path. The story began with population growth and resource limits, and then moved into probability models where the main question was whether an event happens within a fixed window.

This post continues that story, but it changes the question slightly. Instead of asking whether an event happens, we ask when it happens. That shift may sound small, but it changes the mathematics in an important way. Once time itself becomes part of the object we want to model, we need a language that keeps track not just of risk, but of how that risk unfolds as the system changes.

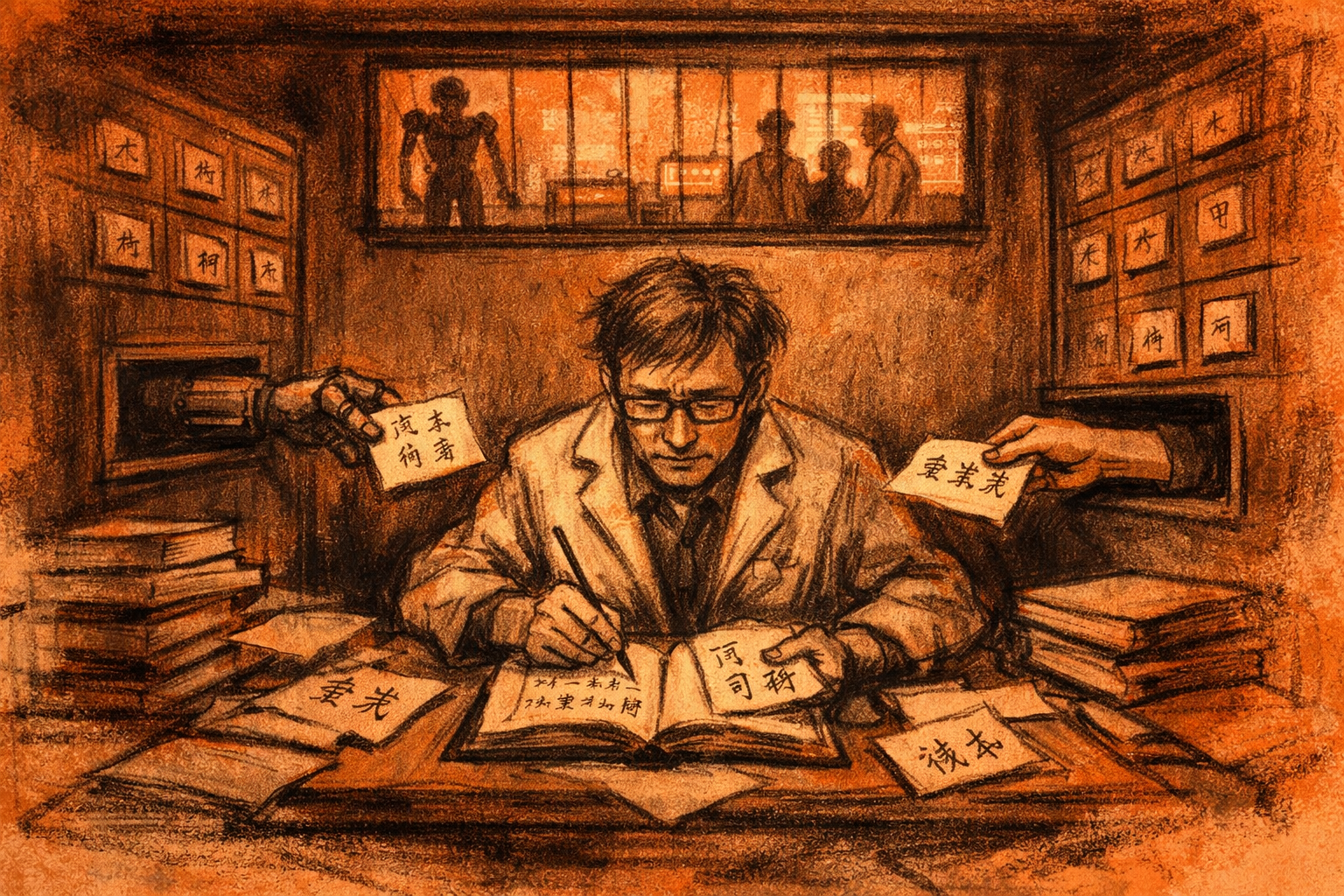

Into the forest we go!

Imagine you are a forest warden responsible for a local woodland full of squirrels, deer, raccoons, and other wildlife. Sitting in a fire watch above a quiet lake, you write down a simple question: given occasional lightning strikes, how likely is it that the forest catches fire?

How should we study a problem like this? My first instinct is usually to ask for a probability: what is the chance the forest burns this season? But the longer I sit with that question, the less satisfying it becomes. A single probability hides almost everything interesting.

One obvious factor is the rate at which lightning strikes occur. If lightning is frequent, then the chance that some strike eventually ignites a tree is higher. But lightning alone is not enough. Conditions also matter: the air may be wet or dry, leaves may be damp or brittle, and fuel may or may not be available.

That last point already tells us something important. For a fire to start, there must first be trees to burn. The state of the forest therefore depends not only on lightning, but also on the rate at which trees grow, die, and recover after disturbance.

Once we start thinking this way, the problem changes shape. The forest is not a static object with a single fire probability attached to it. It is a system driven by events unfolding through time: trees grow, lightning strikes, fires spread, and burning trees burn out. What we really need is not just a probability, but a way of describing how the system’s internal clock is being pushed around by those events.

Defining the Hazard Object

A logit model maps an unrestricted predictor into a probability in $(0, 1)$.

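That is useful, but it also compresses the whole temporal story into one number. A single fire probability for the season says nothing about whether the danger was spread evenly over the months or packed into one dry week. To see that, we need an object that describes risk moment by moment.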

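We call this object the hazard: the instantaneous event rate, conditional on the event not having happened yet. More informally, it tells us how fast the clock toward the event is running at a particular moment. Formally, writing $T$ for the random time at which the event occurs, we write

$$h(t) = \lim_{\Delta t \to 0} \frac{P(t \le T < t + \Delta t \mid T \ge t)}{\Delta t}.$$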

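This definition is worth reading slowly. We look at a very small time interval $[t, t + \Delta t)$, ask how likely the event is to fall inside it given that it has not happened before $t$, divide by the length $\Delta t$ of the interval, and then let that length shrink to zero.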

A high hazard means the event is likely to happen soon, given that it has not happened already. In the forest setting, the hazard would be low after heavy rain, higher during a dry spell, and perhaps higher still when dry fuel and lightning coincide.

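If the hazard tells us how quickly the event is threatening to occur, then the natural companion question is: what is the probability that the event has still not occurred by time $t$? The answer is the survival function,

$$S(t) = P(T > t) = \exp\bigl(-H(t)\bigr),$$

where $T$ is the event time and $H(t) = \int_0^t h(s)\,ds$ is the cumulative hazard, that is, the hazard $h$ accumulated from time zero up to $t$.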

In that sense, hazard analysis takes the bounded-probability story from the previous post and adds a clock to it. The logit asks how predictors map to risk over a fixed window. Once that window is chosen, the answer is a single number: the probability that the event occurs by the end of the interval.

Hazard analysis works one level underneath that summary. Instead of asking only for the final probability, it asks how quickly the system is moving toward the event at each moment. That is what the language of rate is meant to capture. A probability answers “how much chance is there by time $t$?” A rate answers “how fast is the event approaching right now, given that it has not happened yet?”

This difference matters because the same overall probability can hide very different temporal stories. A forest might have a moderate chance of burning this season because danger is steadily present throughout, or because the risk is low for months and then spikes during a brief dry period with frequent lightning. A single summary probability does not distinguish those cases. A hazard function does.
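To make that contrast concrete, here is a small numerical sketch. The two hazard functions, the 100-day season, and all the numbers in them are hypothetical values I picked so that both scenarios accumulate the same total hazard; they are not estimates for any real forest. The survival probability is computed as the exponential of minus the accumulated hazard.

```python
import math

SEASON = 100.0  # hypothetical season length, in days


def steady(t):
    """Constant danger throughout the season (hypothetical rate per day)."""
    return 0.005


def spiky(t):
    """Low danger except for a 10-day dry spell (hypothetical rates per day)."""
    return 0.041 if 60.0 <= t < 70.0 else 0.001


def survival(hazard, t_end, steps=10_000):
    """Approximate exp(-integral of hazard over [0, t_end]) with the midpoint rule."""
    dt = t_end / steps
    cumulative = sum(hazard((i + 0.5) * dt) for i in range(steps)) * dt
    return math.exp(-cumulative)


for name, hazard in (("steady", steady), ("spiky", spiky)):
    mid_season = survival(hazard, SEASON / 2)
    full_season = survival(hazard, SEASON)
    print(f"{name:>6}: P(no fire by day 50) = {mid_season:.3f}, "
          f"P(no fire by day 100) = {full_season:.3f}")
```

Both scenarios end the season with the same probability of having burned, yet halfway through the season the spiky forest is far more likely to be intact. The end-of-season number alone cannot tell them apart.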

So the hazard can be read as the local speed of the event clock, while the survival function records how much event-free probability remains as that clock keeps running. The logit gives us a bounded summary of risk. The hazard and survival functions let us see how that risk accumulates, changes, and reveals itself through time.

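Up to this point, I have spoken as if there were a single event time $T$. The forest, however, has several kinds of events competing to happen next, so we need one hazard for each kind of event.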

Defining the System

We can now make the system more explicit. To describe the forest mathematically, we need two ingredients: a description of the current state, and a list of the event channels that can change that state.

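Let $x$ denote the current state of the forest, in which every cell is in exactly one of three conditions: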

- empty

- occupied by a tree

- burning

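From the current state $x$, four kinds of events can occur: tree growth, lightning ignition, fire spread, and burnout. I will call these the event channels and index them by $k$.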

To stay consistent with the logic above, we should define each event channel in the same way we defined the hazard: as a probability over a very short interval, divided by the interval length, and then taken in the limit as the interval shrinks to zero.

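So for an event channel $k$, we define

$$\lambda_k(x) = \lim_{\Delta t \to 0} \frac{P(\text{event } k \text{ occurs in } [t, t + \Delta t) \mid \text{state } x \text{ at time } t)}{\Delta t}.$$

This says: once the system is in state $x$, the probability that channel $k$ fires during the next short interval of length $\Delta t$ is approximately $\lambda_k(x)\,\Delta t$.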

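Only after writing the rates this way do we choose a simpler model for what the functions $\lambda_k(x)$ look like. The simplification is that every cell, or neighboring pair of cells, that can take part in an event contributes the same constant amount to the rate of that event.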

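With that simplification, a natural choice is

$$\lambda_{\text{grow}}(x) = g\, N_E(x),$$

where $g$ is the growth rate per empty cell and $N_E(x)$ is the number of empty cells;

$$\lambda_{\text{lightning}}(x) = \ell\, N_T(x),$$

where $\ell$ is the lightning ignition rate per tree and $N_T(x)$ is the number of trees;

$$\lambda_{\text{spread}}(x) = \beta\, N_{TB}(x),$$

where $\beta$ is the spread rate per contact and $N_{TB}(x)$ is the number of neighboring pairs made up of one tree and one burning cell;

$$\lambda_{\text{burnout}}(x) = \mu\, N_B(x),$$

where $\mu$ is the burnout rate per burning cell and $N_B(x)$ is the number of burning cells.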

Note that although the system evolves through time, this formulation is memoryless in the Markov sense: once the current state $x$ is known, the rates depend only on $x$ and not on the path the system took to get there.

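These formulas are therefore not a new idea. They are the simplified concrete version of the same hazard logic as before. For example, if one empty cell has growth rate $g$, then $N_E$ empty cells together produce growth events at rate $g\,N_E$, because the hazards of independent cells add.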

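The total rate is then

$$\Lambda(x) = \sum_k \lambda_k(x).$$

This quantity is the total clock speed of the system. When $\Lambda(x)$ is large, events follow each other in quick succession; when it is small, the system can sit unchanged for a long time.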
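As a rough illustration of how the channel rates and the total rate can be read off a state, here is a minimal sketch. It assumes the forest is stored as a small rectangular grid, that neighbors are the four cells up, down, left, and right, and that each channel rate is a per-unit constant multiplied by a count. The function name `channel_rates` and all rate constants are hypothetical choices of mine, not values from the simulator embedded in this post.

```python
EMPTY, TREE, FIRE = 0, 1, 2


def channel_rates(grid, growth=0.05, lightning=0.001, spread=1.0, burnout=0.5):
    """Return a dict of per-channel rates for a grid of EMPTY/TREE/FIRE cells.

    The default rate constants are illustrative placeholders.
    """
    rows, cols = len(grid), len(grid[0])
    n_empty = n_tree = n_fire = n_pairs = 0
    for r in range(rows):
        for c in range(cols):
            cell = grid[r][c]
            if cell == EMPTY:
                n_empty += 1
            elif cell == TREE:
                n_tree += 1
            else:
                n_fire += 1
            # Look only right and down so each neighboring pair is counted once.
            for dr, dc in ((0, 1), (1, 0)):
                rr, cc = r + dr, c + dc
                if rr < rows and cc < cols and {cell, grid[rr][cc]} == {TREE, FIRE}:
                    n_pairs += 1
    return {
        "growth": growth * n_empty,
        "lightning": lightning * n_tree,
        "spread": spread * n_pairs,
        "burnout": burnout * n_fire,
    }


forest = [
    [TREE, TREE, EMPTY],
    [TREE, FIRE, EMPTY],
    [EMPTY, TREE, TREE],
]
rates = channel_rates(forest)
total_rate = sum(rates.values())
print(rates)
print("total rate:", total_rate)
```

In this tiny forest the single burning cell touches three trees, so fire spread dominates the total rate even though lightning is rare.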

Turning the Formalism into a Simulator

So far, we have reduced a complicated system to its essential ingredients: discrete event types, each with its own rate. The next step is to turn that description into a simulator. In the forest example, those events are things like tree growth, lightning strikes, fire spread, and burnout. The Gillespie algorithm gives us a way to do exactly this, but it helps to first see why the waiting time to the next event takes the form it does.

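Let $\tau$ denote the waiting time until the next event, measured from the present moment. Then

$$S(t) = P(\tau > t)$$

is the probability that no event has happened by time $t$.

Now ask a slightly more local question: what is the probability that no event has happened by the slightly later time $t + \Delta t$?

We can rewrite this using conditional probability:

$$S(t + \Delta t) = P(\tau > t + \Delta t \mid \tau > t)\, S(t).$$

This decomposition is the key step. It says that surviving until time $t + \Delta t$ requires two things: surviving until time $t$, and then also surviving the short extra interval of length $\Delta t$.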

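Now we use the fact that, once the current state $x$ is fixed, the total rate $\Lambda(x)$ does not change until the next event. For a short interval this gives

$$P(t < \tau \le t + \Delta t \mid \tau > t) \approx \Lambda(x)\, \Delta t.$$

In words: conditional on no event having happened yet, the probability that some event occurs in the next tiny interval is approximately the total rate times the interval length.

The complementary probability is therefore

$$P(\tau > t + \Delta t \mid \tau > t) \approx 1 - \Lambda(x)\, \Delta t.$$

Substituting this into the previous identity gives

$$S(t + \Delta t) \approx S(t)\,\bigl(1 - \Lambda(x)\, \Delta t\bigr).$$

Rearranging,

$$S(t + \Delta t) - S(t) \approx -\Lambda(x)\, S(t)\, \Delta t.$$

Dividing by $\Delta t$ and letting $\Delta t \to 0$, we obtain

$$\frac{dS}{dt} = -\Lambda(x)\, S(t).$$

This is a first-order linear differential equation, and its solution, using $S(0) = 1$, is

$$S(t) = e^{-\Lambda(x)\, t}.$$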
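The limiting step can also be checked numerically. The sketch below repeatedly applies the small-step rule "multiply the survival probability by one minus the total rate times the step length" and compares the result with the exponential solution. The total rate and time horizon are arbitrary illustrative numbers.

```python
import math


def survival_by_small_steps(total_rate, t, steps):
    """Multiply together `steps` factors of (1 - total_rate * dt), with dt = t / steps."""
    dt = t / steps
    s = 1.0
    for _ in range(steps):
        s *= 1.0 - total_rate * dt
    return s


total_rate = 2.0  # illustrative total rate, in events per unit time
t = 1.5           # illustrative time horizon
exact = math.exp(-total_rate * t)

for steps in (10, 100, 1_000, 10_000):
    approx = survival_by_small_steps(total_rate, t, steps)
    print(f"steps={steps:>6}: approx={approx:.6f}, exact={exact:.6f}")
```

As the step length shrinks, the product approaches the exponential, which is the discrete counterpart of solving the differential equation.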

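So, conditional on the current state $x$, the probability that nothing has happened yet decays exponentially in time, at a speed set by the total rate $\Lambda(x)$.

This gives a clear interpretation of the total rate. If $\Lambda(x)$ is large, the survival probability falls off quickly and the next event is likely to arrive soon. If $\Lambda(x)$ is small, the system tends to wait a long time. The mean waiting time is $1/\Lambda(x)$.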

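Since the survival function is

$$S(t) = e^{-\Lambda(x)\, t},$$

the waiting time $\tau$ has cumulative distribution function

$$F(t) = P(\tau \le t) = 1 - e^{-\Lambda(x)\, t},$$

which is the exponential distribution with rate $\Lambda(x)$.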

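This tells us how to sample the time until the next event. Because the system does not change between events, the rates remain fixed during that waiting period. If $u$ is drawn uniformly from $(0, 1)$, we can set it equal to the cumulative distribution function evaluated at the waiting time $\tau$:

$$u = 1 - e^{-\Lambda(x)\, \tau}.$$

Solving for $\tau$ gives

$$\tau = -\frac{\ln(1 - u)}{\Lambda(x)}.$$

Since $1 - u$ is itself uniformly distributed on $(0, 1)$, we can equivalently write

$$\tau = -\frac{\ln u}{\Lambda(x)}.$$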
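Here is a minimal sketch of that sampling step, using inverse-transform sampling of an exponential waiting time. The function name `sample_waiting_time` and the total rate of 4.0 are illustrative assumptions. Since `random.random()` returns values in $[0, 1)$, the code takes the logarithm of one minus that value so the argument is never zero.

```python
import math
import random


def sample_waiting_time(total_rate, rng):
    """Draw one exponential waiting time with the given total rate."""
    u = 1.0 - rng.random()  # lies in (0, 1], so log(u) is always defined
    return -math.log(u) / total_rate


rng = random.Random(0)  # fixed seed so the run is repeatable
total_rate = 4.0        # illustrative value
samples = [sample_waiting_time(total_rate, rng) for _ in range(200_000)]
sample_mean = sum(samples) / len(samples)
print(f"sample mean = {sample_mean:.4f}, expected 1 / total_rate = {1 / total_rate:.4f}")
```

The sample mean should land close to one over the total rate, which is the mean of the exponential distribution.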

So the logarithm appears because we are inverting the exponential cumulative distribution function. Once the waiting time has been sampled, we choose which event occurs using probabilities proportional to the individual event rates:

$$P(\text{next event is } k \mid x) = \frac{\lambda_k(x)}{\Lambda(x)}.$$

In practice, this is done by drawing another uniform random number and comparing it to the cumulative normalized rates. After that, we update the system state, recompute the rates, and repeat the procedure. This is the Gillespie algorithm: a direct simulator of competing hazards in continuous time.
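The whole loop fits in a short sketch. To keep it compact I make a simplifying assumption that is not part of the spatial model above: the forest is treated as well mixed, so the state is just three counts (empty, tree, burning) and fire spread happens at a rate proportional to the product of trees and burning cells divided by the number of cells. All rate constants, the function names, and the initial counts are hypothetical.

```python
import math
import random


def choose_channel(rates, rng):
    """Pick an index with probability proportional to its rate (sum must be positive)."""
    threshold = rng.random() * sum(rates)
    cumulative = 0.0
    chosen = None
    for k, rate in enumerate(rates):
        if rate <= 0.0:
            continue  # a channel with zero rate can never fire
        cumulative += rate
        chosen = k  # last positive-rate channel doubles as a rounding fallback
        if threshold < cumulative:
            break
    return chosen


def gillespie(n_empty, n_tree, n_fire, t_end, rng,
              growth=0.05, lightning=0.001, spread=1.0, burnout=0.5):
    """Simulate well-mixed forest counts until t_end; return (t, empty, tree, fire) tuples."""
    n_cells = n_empty + n_tree + n_fire
    t = 0.0
    history = [(t, n_empty, n_tree, n_fire)]
    while True:
        rates = [
            growth * n_empty,                    # 0: empty -> tree
            lightning * n_tree,                  # 1: tree -> fire (lightning)
            spread * n_tree * n_fire / n_cells,  # 2: tree -> fire (spread)
            burnout * n_fire,                    # 3: fire -> empty
        ]
        total = sum(rates)
        if total <= 0.0:
            break  # nothing can happen any more
        t += -math.log(1.0 - rng.random()) / total  # exponential waiting time
        if t > t_end:
            break
        k = choose_channel(rates, rng)
        if k == 0:
            n_empty, n_tree = n_empty - 1, n_tree + 1
        elif k in (1, 2):
            n_tree, n_fire = n_tree - 1, n_fire + 1
        else:
            n_fire, n_empty = n_fire - 1, n_empty + 1
        history.append((t, n_empty, n_tree, n_fire))
    return history


rng = random.Random(42)
history = gillespie(n_empty=100, n_tree=295, n_fire=5, t_end=20.0, rng=rng)
print("events simulated:", len(history) - 1)
print("final (t, empty, tree, fire):", history[-1])
```

Recomputing every rate after each event is the simplest approach and is fine for a handful of channels. A spatial version would keep the same loop but track per-cell rates, as in the grid picture above.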

From Logistic to Hazard

The move from logit to hazard is a move from outcome to process. A logit can tell us the chance that something happens by the end of a window. A hazard asks how the event is being approached moment by moment, given that it has not happened yet. That may sound like a small change in emphasis, but it leads to a very different way of thinking. Once events are governed by rates, time is no longer hidden inside a summary probability. It becomes part of the model itself. And from there, simulation is not an extra trick added at the end, but a natural extension of the same idea.

Interactive simulator

This embedded model shows the Gillespie dynamics directly: total hazard controls the waiting time, normalized rates control which event happens next, and the heatmap highlights local ignition risk. The simulator is written in Rust, compiled to WebAssembly, and embedded here as a standalone canvas app.